Why grounding AI in proprietary knowledge is the decisive competitive lever for 2025 and beyond

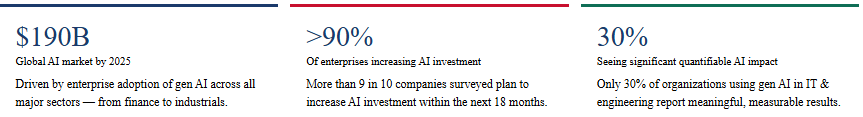

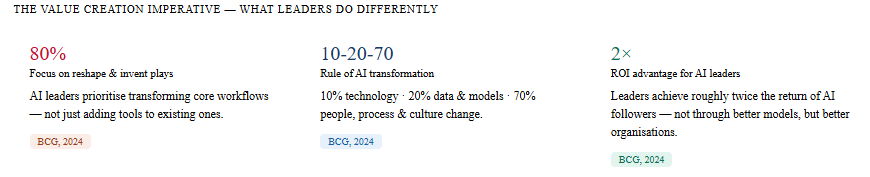

The promise of large language models (LLMs) has captivated enterprise leaders across every sector. Yet the gap between organizations generating genuine competitive advantage from AI and those still cycling through pilots remains wide. The reason, consistently, is not the quality of the model; it is the absence of enterprise-specific knowledge. Retrieval-augmented generation (RAG) closes that gap. It is, for 2025, the single most important architectural pattern for deploying AI at enterprise scale.

What RAG is and why it matters

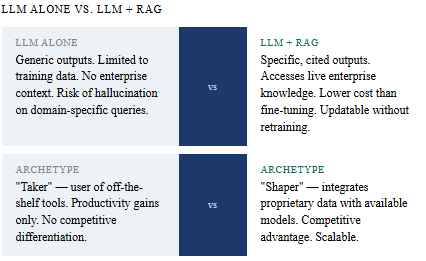

Standard LLMs are trained on vast but generic corpora. Deployed off-the-shelf, they lack access to an organization's proprietary policies, client records, technical manuals, or regulatory filings. The result is output that is fluent but insufficiently specific, a fundamental limitation in high-stakes enterprise contexts.

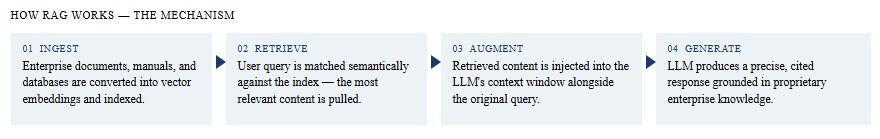

RAG addresses this by extending the LLM's knowledge at inference time. Rather than retraining or fine-tuning the model (both costly and slow), RAG queries a curated, up-to-date enterprise knowledge base, retrieves the most semantically relevant content, and injects it into the model's context before generating a response. Critically, outputs include source citations, enabling auditability and trust. As McKinsey observes, RAG delivers many of the benefits of a custom-built LLM at a fraction of the cost.
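The retrieve-then-inject flow described above can be illustrated with a minimal Python sketch. It is not a production design: the knowledge base, document ids, and function names are hypothetical, word-overlap cosine similarity stands in for the semantic embeddings a real system would use, and the final call to an LLM is left out.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory knowledge base (assumption for illustration);
# a real deployment would index enterprise documents in a vector store.
KNOWLEDGE_BASE = [
    {"id": "policy-001", "text": "Refunds are issued within 14 days of a valid return request."},
    {"id": "manual-007", "text": "Reset the pump controller by holding the power button for ten seconds."},
    {"id": "reg-112", "text": "Customer records must be retained for seven years under internal policy."},
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two term-frequency Counters."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, top_k=2):
    """Return up to top_k documents with non-zero similarity to the query."""
    q = Counter(tokenize(query))
    scored = [(cosine_similarity(q, Counter(tokenize(d["text"]))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:top_k] if score > 0]


def build_prompt(query, passages):
    """Inject retrieved passages, tagged with source ids, ahead of the question."""
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{context}\n"
        f"Question: {query}"
    )


question = "How do I reset the pump controller?"
passages = retrieve(question, KNOWLEDGE_BASE, top_k=1)
prompt = build_prompt(question, passages)
print(prompt)  # this prompt would then be sent to the LLM of choice
```

Tagging each passage with its source id is what makes citations possible: the model is asked to answer from the listed sources and to reference their ids, so a reviewer can trace each claim back to a document.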

"RAG allows LLMs to access and reference information outside their own training data — and, crucially, with citations included — delivering some of the benefits of a custom LLM at considerably less expense." — McKinsey & Company

Where value is being captured


Knowledge management: Engineers and analysts query decades of internal research, patents, and technical standards in seconds, compressing innovation cycles.

Customer service: AI agents grounded in live product and policy data resolve queries with specificity, reducing average handling time by 18–25% in documented deployments (BCG, 2024).

Regulatory compliance: Legal and compliance teams cross-reference evolving multi-jurisdictional requirements against internal documentation in real time.

Field force & maintenance: Technicians receive step-by-step guidance drawn from live equipment manuals and fault histories, improving first-time fix rates and reducing downtime.

R&D acceleration: Researchers surface relevant prior work, identify failure modes, and generate hypotheses at a pace impossible through conventional search.

The strategic framing: takers, shapers, makers

McKinsey's taxonomy of AI archetypes provides a useful lens. 'Takers' use off-the-shelf tools as-is: useful for productivity, insufficient for competitive differentiation. 'Makers' build foundation models from scratch, which is prohibitively expensive for most enterprises. The optimal position for the vast majority of organizations is 'shaper': integrating proprietary data with available models via RAG to create enterprise-specific AI capabilities that competitors cannot easily replicate.

This framing carries a direct strategic implication. The competitive moat in the AI era is not access to the best model, because models are increasingly commoditized. It is the quality, depth, and structure of the enterprise knowledge base that feeds the model. Organizations that invest now in curating, indexing, and maintaining proprietary knowledge assets will compound their advantage as model capabilities continue to improve.

The strategic takeaway

The organizations that will lead in the AI era are not those with access to the largest models. They are those that build the richest, most structured proprietary knowledge assets and deploy RAG to make that knowledge continuously accessible, auditable, and actionable across the enterprise. The technology is proven. The architecture is established. The constraint, as it has always been, is organizational will and execution discipline.

Industry leaders should move beyond the question of whether to deploy RAG and focus instead on which knowledge domains to prioritize first, how to govern outputs responsibly, and how to build the organizational capabilities to sustain and scale what they build.

At LINO Consulting & Research GmbH, we help organizations move from hype to enterprise value.

We work with companies to:

Design long-term growth and international expansion strategies

Build scalable fan monetization and digital engagement models

Structure media rights, platforms, and content strategies

Support ownership transitions, valuations, and acquisition due diligence

Restructure costs and operations to improve financial resilience

Our focus is not on chasing short-term peaks, but on building repeatable, durable business models that attract capital, retain fans, and perform across cycles.

Sources:

McKinsey & Company — What is RAG? (2024) | A Generative AI Reset (2024) | Enterprise Technology's Next Chapter (2024)

Boston Consulting Group — Value Creation with AI (December 2024) | What is RAG?

Ernst & Young — Generative AI Risk and Governance (2024)